The Future. Google is getting into the virtual try-on fray with a feature that uses generative AI to show how a garment would look on a diverse range of models. That’s quite different from most virtual try-on tools, which use AR or body scanning to help customers see a garment on themselves. But Google’s tool may give users a better sense of the real-world fit… and help curb costly returns.

Generative fit

Google hopes to make virtual try-on tech a little more of a natural fit with its new search feature.

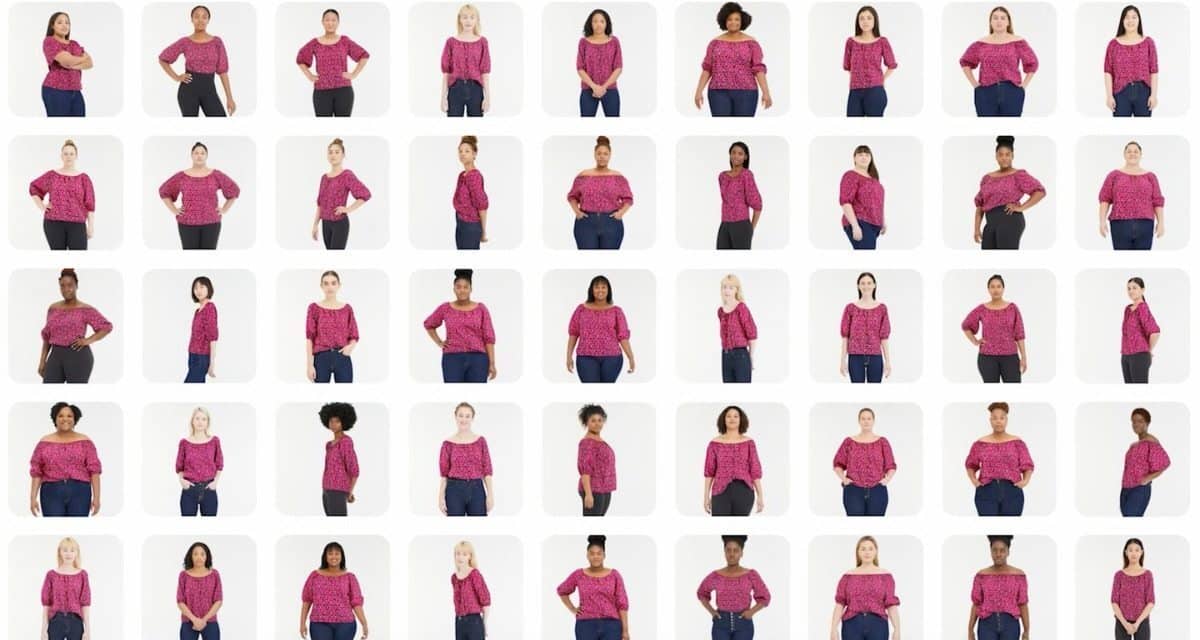

- When available, users can click a “try on” label and choose among 40 models of various sizes, body shapes, skin tones, and ethnicities (women only for now, with men coming soon) to see how a particular garment looks on them.

- Generative AI, using a technique called diffusion, then blends the model and the article of clothing.

- That lets users see how the garment will “drape, fold, cling, stretch and form wrinkles and shadows” on the model.

The feature could be a boon for both Google and clothing brands. Google is on a warpath to keep its classic search product unique and relevant amid the rise of AI chatbots like ChatGPT. Meanwhile, brands routinely rely on Google Search to drive sales. Call it the SEO clothing rack.

The feature won’t cost brands anything extra. They just need to send in the same model and clothing photos they would for e-commerce listings, and Google will do the rest. H&M, Anthropologie, Everlane, and Loft are already on board… and you can expect many more to follow soon.
